AI versus… everything.

They say it’s inevitable. But you also have a say.

Hi! This is where Jeanine and I share tips from the Design Career Handbook to help you chart and navigate a successful career path. I also share perspectives on what’s happening in technology and design. If you’re looking for assistance on your journey, you can book a session on ADPList, or we can discuss 1:1 coaching. - Kevin F

A few weeks ago, I caught the documentary The AI Doc: Or How I Became an Apocaloptimist at my local theater with Jeanine and a couple of our friends. I wrote on LinkedIn that I found it to be informative and thought-provoking. It was well-made, presenting significant perspectives on all sides of the AI debate → what it can do for humanity vs. the existential risks that it may pose. I was surprised by how many notable figures participated in the film, including:

Sam Altman, CEO of OpenAI (ChatGPT)

Dario Amodei, CEO of Anthropic (Claude)

Daniela Amodei, President of Anthropic (Claude)

Demis Hassabis, CEO of Google DeepMind

Ilya Sutskever and Jan Leike, prominent AI safety and risk researchers

Karen Hao, author of the must-read book Empire of AI

I’m sure I missed a bunch of other important names. Notably, Mark Zuckerberg and Elon Musk were invited to participate, but declined and/or backed out.

The documentary was directed by Oscar-winning filmmaker Daniel Roher and Charlie Tyrell. Roher provided a very human, emotional throughline by revealing that he and his wife, Caroline (also a filmmaker), were expecting their first child. They openly worry about the world their child will grow up in. Their feelings of uncertainty weave through the hypotheses, concerns, and chats with numerous subject-matter experts.

The takeaway is that AI isn’t going away. Which means we all must educate ourselves and participate in the discourse on what place it should have in our lives. At the very least, I implore you to research the issues, the companies supplying AI technologies, how institutions are employing them, and the legal and governmental stances on regulating them. You can start by checking out the documentary’s trailer.

Now, I joke all the time about not being an expert, yet I seem to be reading about, talking about, and experimenting with AI nearly every single day. That’s because I’m just like you—trying to figure out where it fits into my daily routine, as well as my hopes and dreams for the future. (Yes, I typed that em dash, and every other word in this post.) Another thing I’m clear about is that I’m a technologist. Throughout my career, I’ve been imagining, using, and building all kinds of tech that enabled me, my peers, and others to do things we couldn’t do before. With the comparisons to previous technological revolutions, I’m still on the side of: “But this is different.”

It’s clear to me, and many others, that the impact of AI is much more widespread and happening faster than anything that’s come before. Matt Shumer, who’s been working and investing in the AI space for six years, wrote an article in February called “Something Big Is Happening.” It was viewed over 30 million times within a week, garnering coverage by mainstream media. Shumer said he wrote it for his parents and family to describe how rapidly AI models are advancing and how big an impact they will have across all sectors of industry and jobs.

A few of the extreme views:

I’m not interested in using AI. ←→ I’m not using AI enough.

It takes too much time to learn AI. ←→ AI is enabling me to do 10x more things.

AI cannot do my job. ←→ AI is going to take my job.

I hate AI slop. ←→ This is the worst it’s going to be. It’s getting better every day.

AI is ripping off creators. ←→ I’m an artist now.

Having vibe-coded an app myself, I can attest to the dopamine hit that’s been compared to the addictive qualities of gambling. My app was a personal experiment, but tons of people, organizations, and friends of mine have taken a positive view of the practice, even the Human Rights Foundation. I sometimes have extreme Luddite-inspired days, wanting to unplug and shake my fist at it all. But then I follow my own advice. The advice I give to everyone I mentor and coach, and the advice Jeanine gives to all her students. That is, we must experiment with it firsthand, talk to others who are doing the same, and decide for ourselves.

I’m still hopeful for some cool breakthrough, preferably in battling disease or reversing climate change. I’m dumbfounded that people are using it for creative endeavors. I’m unclear about the joy in (predominantly) using AI to create art, fiction, or film. I don’t think AI-generated work is “owned” by the creator, and it seems that, since the Supreme Court declined to hear the case, the standing US court ruling is that AI-crafted work cannot be copyrighted. You may have caught my caveat above; I think we’re all wading through the question of how much AI assistance we believe is appropriate. Is using it for research okay? How about summarizing our thoughts or clarifying our ideas? What about generating ideas? It’s up to each of us to decide.

There’s so much being reported about AI that it’s hard to keep up. It can be all-consuming and overwhelming. Here are the types of headlines that catch my attention and help me form my own opinions.

People are mixed on it, but this significant MIT study sparked a conversation about ChatGPT’s impact on our brains. Cognitive neuroscientist Dr. Caroline Leaf is among many who say AI is rewiring our brains. Psychologists, sociologists, and researchers continue to weigh in.

Top engineers at Anthropic and OpenAI have indicated that “pretty much” 100% of the code on their respective platforms is now written by AI. (For non-techies: AI is building the next version of itself.) Tech companies are saying this is how software is built now.

There are spikes of uproar over how AI is impacting journalism. Major news outlets have been criticized for a rapid decline in the quality and/or integrity of their reporting on events, issues, interviews, and even book reviews.

There have been multiple reports in recent years of lawyers citing “hallucinated” court cases, facts, or quotes. A few have had to pay fines, and some have been disqualified or even suspended from practicing law.

A few “authors” (writing under pen names) have bragged about churning out hundreds of books using AI. As a pure money-making scheme, I get it. As a prolific reader, I’m bummed about this. Do fans of genre writing even care if books are AI-generated? And on the off chance that you didn’t know, dear reader, those AI-generated books are only possible because the AI companies pulled off the biggest content heist ever, using millions of pirated books to train their models (OpenAI, Anthropic, Meta, Google, and even Apple have all been implicated).

I saved the best (actually the worst) for last: AI’s impact on education. I remember the early position that “AI would level the playing field.” Of course, there was something to that. But there are as many voices today speaking about severe cognitive decline happening rapidly due to AI use. There are countless anecdotes from educators about the precipitous drop-off in attention spans, a lack of focus beyond short periods, and the need to compromise on learning objectives and simplify materials to help “students to pass.” On one hand, I’ll openly say that education reform is long overdue, and the entire academic system seems ill-equipped to evolve as quickly as the tech world demands. On the other hand, I’m occasionally encouraged when I hear of students and academic institutions discussing the issues and experimenting together.

Clearly, I’m mostly pointing out the negative aspects of AI. But that’s the point: we are easily enticed by shiny new things. We lack concern about long-term consequences and overlook horrific “one-off” events. As a comparison, it’s hard to argue that we’re unaware of the mental health harms of social media, but we’re not going to stop using it altogether. (BTW: Meta and Google are in court for intentionally engineering addiction in minors.) We need to look at all sides of the equation, sooner rather than later. Be informed so you can make the best decisions for yourself.

What can you do?

Follow prominent voices and respected news and research covering AI topics. Spend a little time learning about the companies behind the tools that you use. Make an effort to use the ones that most align with your values.

Read Empire of AI by Karen Hao, which provides details on how these AI technologies came about, including the geopolitical, environmental, ethical, governmental, and, of course, financial implications of it all.

Watch The AI Doc: Or How I Became an Apocaloptimist if you see it playing near you. Hopefully, it will be released on a streaming platform eventually.

The tech community and leaders of various AI companies have urged or, at least, expressed an openness to regulation. It’s up to the public to demand it of our local governments. The film’s website lists resources on its Global AI Action Plan page to help you get informed and involved.

Especially for students:

Don’t automatically accept output from AI platforms as the most correct, ideal, or best. Researchers call this “cognitive surrender,” and indicate that a large majority are “readily incorporating AI-generated outputs into their decision-making processes, often with minimal friction or skepticism.” [I bolded that last bit.]

Verify facts and cite sources to minimize the risk that you are copying or plagiarizing someone else’s work - whether visual art, design, or writing.

You are not learning if you are simply regurgitating AI output. The world needs your voice and perspective. That means sitting with the information you are absorbing, noodling it around in your brain, making fresh connections, and forming your own opinions.

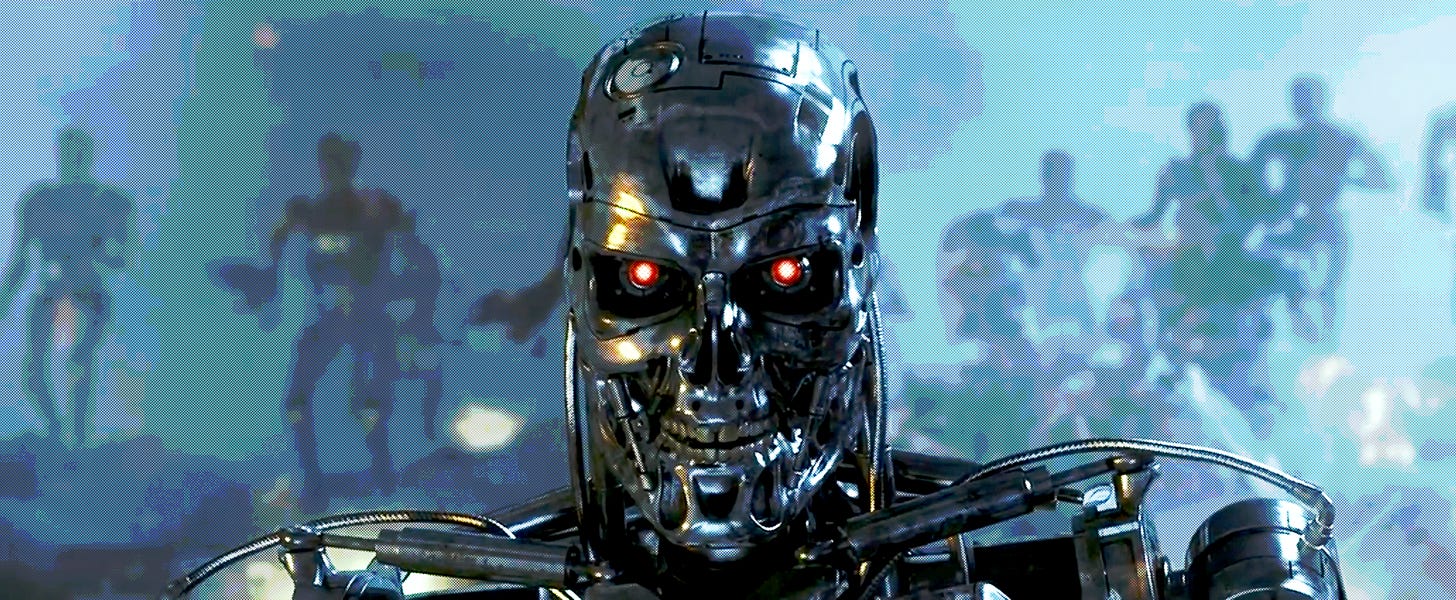

Don’t leave your critical thinking to the robots, or we’ll end up here, or here, or here, or here, or here, or here… Okay, I’ll stop, already.

Top image: Cameron, James (Director). The Terminator. Hemdale Film Corporation; Pacific Western Productions; Orion Pictures, 1984.

Since last time:

My book about career resilience and reinvention is coming along. I’ve completed a first draft and am in the editing phase. More to come! 😅

In case you missed it: It was an honor to be interviewed on the incredible AIGA Design Podcast with Lee-Sean Huang and Giulia Donatello. We dug into the usual hot topics, from adaptability in design careers and the evolving role of design leaders to the importance of personal branding. Of course, we had to touch upon AI. Listen to the podcast or watch the video. 🎧

Our book would make an excellent gift for any designer! It has job search, interview, and portfolio advice, plus plenty of tips for working designers to level up.

The Design Career Handbook: Everything You Need to Know to Get a Job and Be Successful is available in paperback and ebook formats on Amazon and Barnes & Noble online.